Workbook Agent

This feature requires enabling the Workbook Agent setting in AI settings.

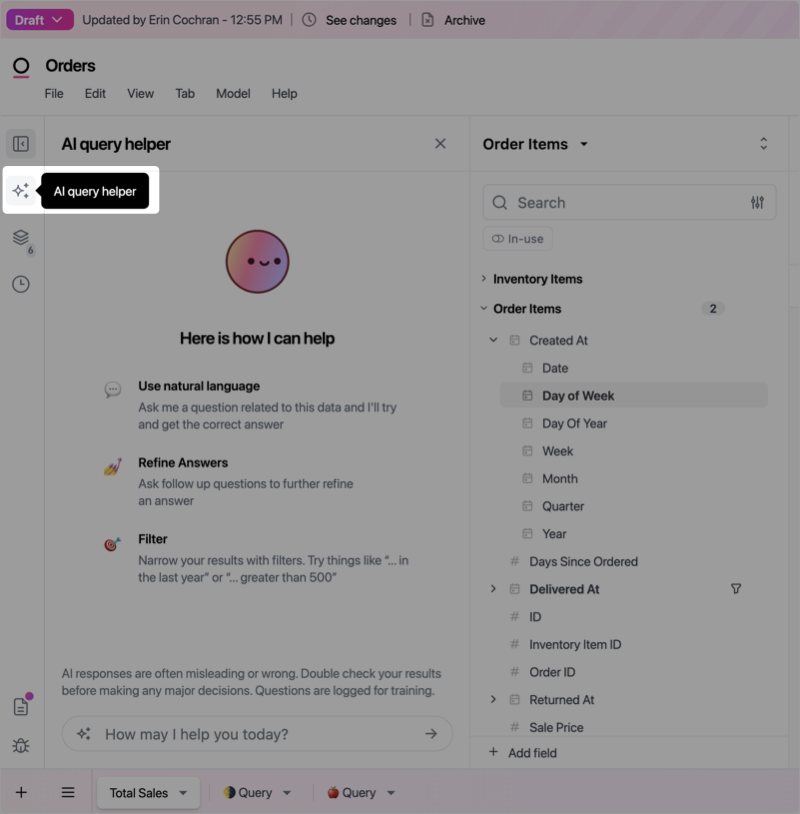

Generating queries

In a document draft, click the ✨ (three stars) icon in the left side navigation. A panel opens next to the field picker with tips on using the feature and a text box where you can ask your first question.

- Iterate on the questions you ask to provide a more refined result. For example:

  - Add totals to results, such as adding a column total that sums a Sale Price measure

  - Create new measure fields, such as a Sale Price Average

  Note: Closing the Workbook Agent will delete the text history.

- Attach images by pasting from your clipboard to provide visual context (for example, pasting a screenshot of a chart you want to analyze)

To attach an image, copy it to your clipboard and paste it directly into the Workbook Agent text input. The image appears as a file chip, ready to be sent with your message.

The Workbook Agent > File uploads AI setting must be enabled to use this feature.

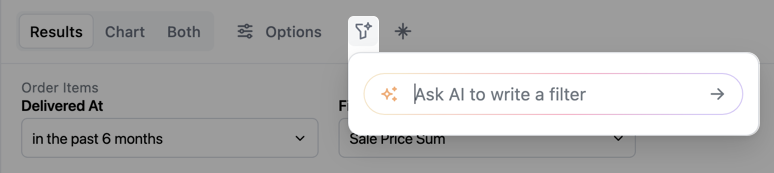
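
For reference, the two refinement examples listed earlier in this section (a column total and an average measure) correspond to ordinary aggregations. The Python sketch below only illustrates the arithmetic. The values and variable names are hypothetical, and it does not show how Omni computes or stores these fields.

```python
from statistics import mean

# Hypothetical Sale Price values for three result rows (not real data).
sale_prices = [10.0, 20.0, 30.0]

# A column total sums the measure across all rows in the result.
sale_price_total = sum(sale_prices)

# A "Sale Price Average" measure is the arithmetic mean of the same values.
sale_price_average = mean(sale_prices)

print(sale_price_total, sale_price_average)
```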

Creating filters

Make filtering easier by having the agent create filters for you using natural language. You can describe a filter like “Only include orders created on a Saturday” or “Exclude customers with a status of deactivated” and the agent will automatically apply the filter to the query.

To add an AI filter to your query:

- Use the Workbook Agent window, or

- Click the starred funnel icon next to the Options button in the Results tab:

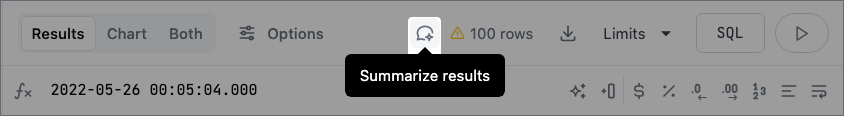
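
To make the two example filter descriptions above concrete, here is a minimal Python sketch of the row-level conditions they describe. The sample rows, field names (`created_on`, `customer_status`), and helper functions are all hypothetical. This illustrates the intended filter logic only, not how the agent or Omni implements filters.

```python
from datetime import date

# Hypothetical sample rows; field names are illustrative, not Omni's schema.
orders = [
    {"id": 1, "created_on": date(2024, 6, 1), "customer_status": "active"},
    {"id": 2, "created_on": date(2024, 6, 3), "customer_status": "active"},
    {"id": 3, "created_on": date(2024, 6, 8), "customer_status": "deactivated"},
]

def created_on_saturday(row):
    # date.weekday() numbers Monday as 0, so Saturday is 5.
    return row["created_on"].weekday() == 5

def not_deactivated(row):
    # Keep every row whose customer status is anything other than "deactivated".
    return row["customer_status"] != "deactivated"

# "Only include orders created on a Saturday"
saturday_orders = [r["id"] for r in orders if created_on_saturday(r)]

# "Exclude customers with a status of deactivated"
active_orders = [r["id"] for r in orders if not_deactivated(r)]
```

Note that the first description is an inclusion rule and the second is an exclusion rule, which is why one predicate tests for equality and the other for inequality.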

Summarizing query results

This feature requires enabling the Workbook Agent setting in AI settings.

The agent can summarize your query results. For example, you can ask it to:

- Describe any anomalies in the data

- Identify insights into trends

- Detail the next steps you should take

View full text response

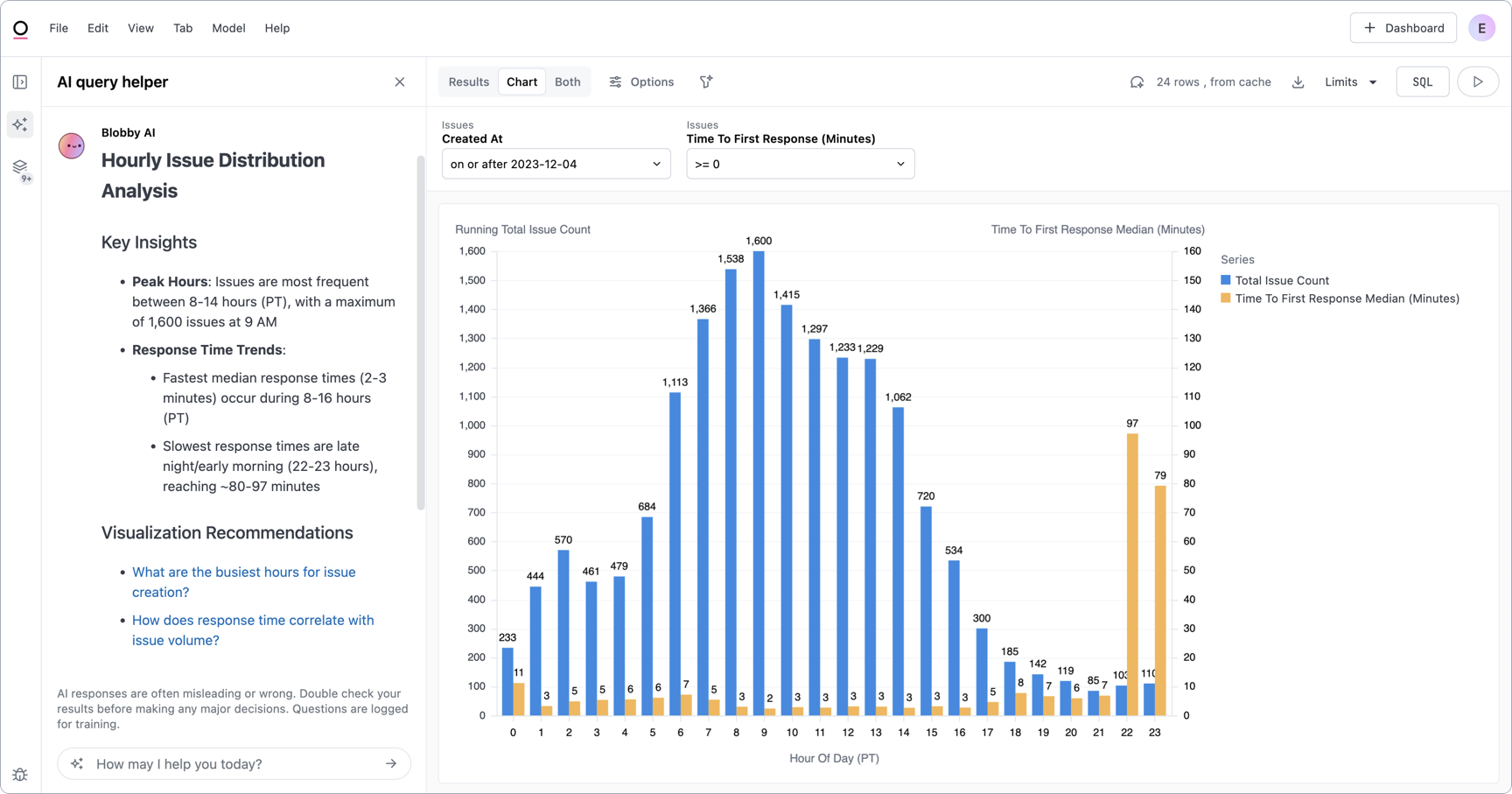

Hourly Issue Distribution Analysis

Key Insights

- Peak Hours: Issues are most frequent between 8-14 hours (PT), with a maximum of 1,600 issues at 9 AM

- Response Time Trends:

  - Fastest median response times (2-3 minutes) occur during 8-16 hours (PT)

  - Slowest response times are late night/early morning (22-23 hours), reaching ~80-97 minutes

- What are the busiest hours for issue creation?

- How does response time correlate with issue volume?

- Optimize support staffing during peak hours (8-14 hours)

- Investigate reasons for slow response times during late night/early morning

- Consider implementing automated first-response mechanisms during low-staffing periods

View full text response

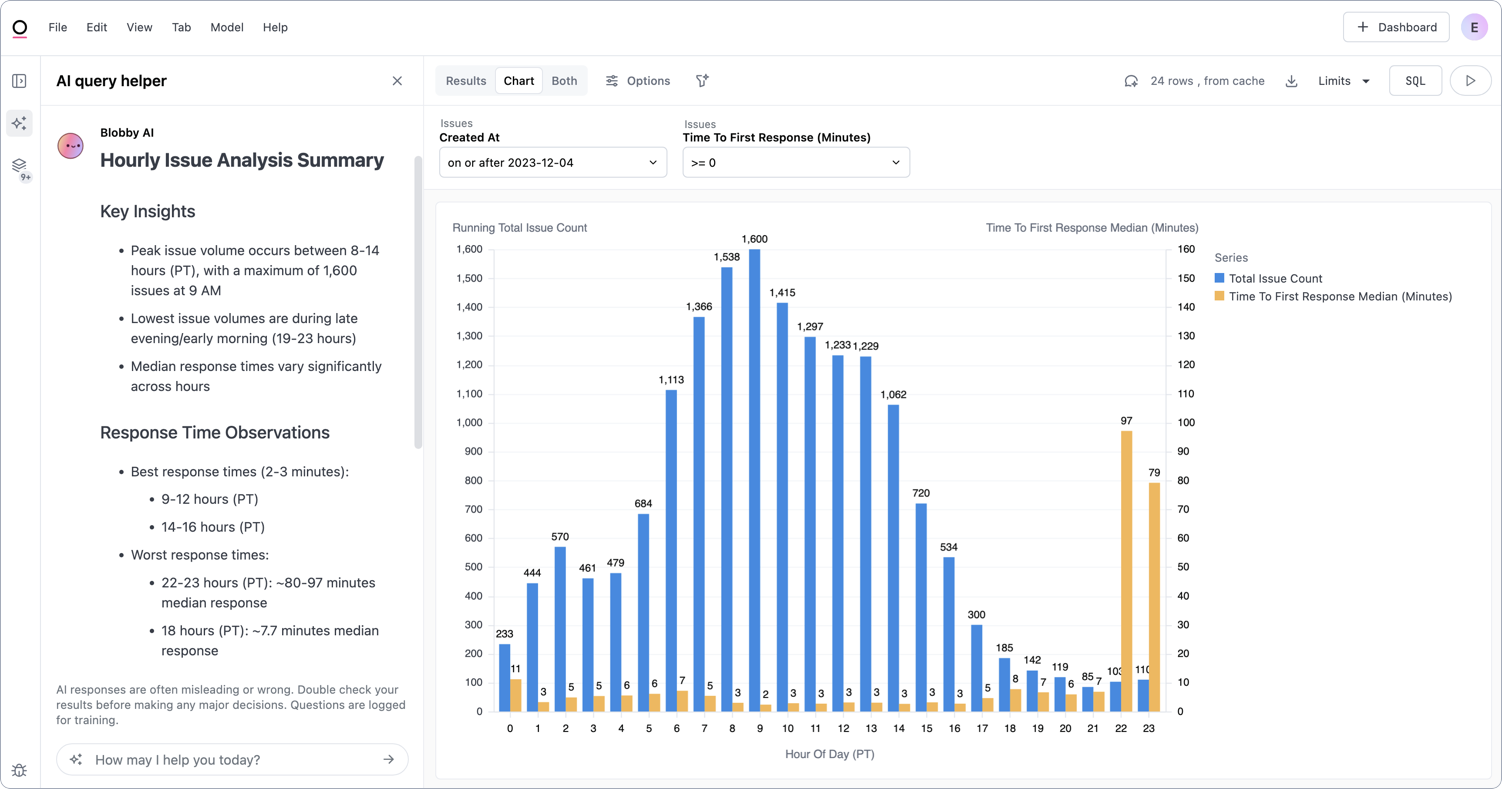

Hourly Issue Analysis Summary

Key Insights

- Peak issue volume occurs between 8-14 hours (PT), with a maximum of 1,600 issues at 9 AM

- Lowest issue volumes are during late evening/early morning (19-23 hours)

- Median response times vary significantly across hours

- Best response times (2-3 minutes):

  - 9-12 hours (PT)

  - 14-16 hours (PT)

- Worst response times:

  - 22-23 hours (PT): ~80-97 minutes median response

  - 18 hours (PT): ~7.7 minutes median response

- Investigate staffing during peak hours

- Optimize support coverage for 22-23 hour window

- Understand why 9 AM has highest issue volume

- Adjust support team scheduling

- Implement automated first-response mechanisms

- Analyze root causes of high-volume periods

Generating forecasts

The agent can generate statistical forecasts for time-series data. Refer to Generating forecasts with AI to learn more.

Generating visualizations

The agent can also generate visualizations based on your query results. Refer to Generating visualizations with AI to learn more.

Getting help

If you’re stuck and can’t remember how to do something in Omni, ask the Omni Agent. Questions like “How do I do [thing]?” will prompt the agent to search the official Omni docs and provide you with an answer, all without leaving your Omni workflow. You can also directly tell the agent to search the docs when researching the answer to your question.

Working in an embedded context? If you have the Hide Omni watermark setting enabled to provide a fully white-labeled experience, the AI doc search feature will respect it. Omni doc links will not be returned in chat, even if explicitly requested.